Abstract

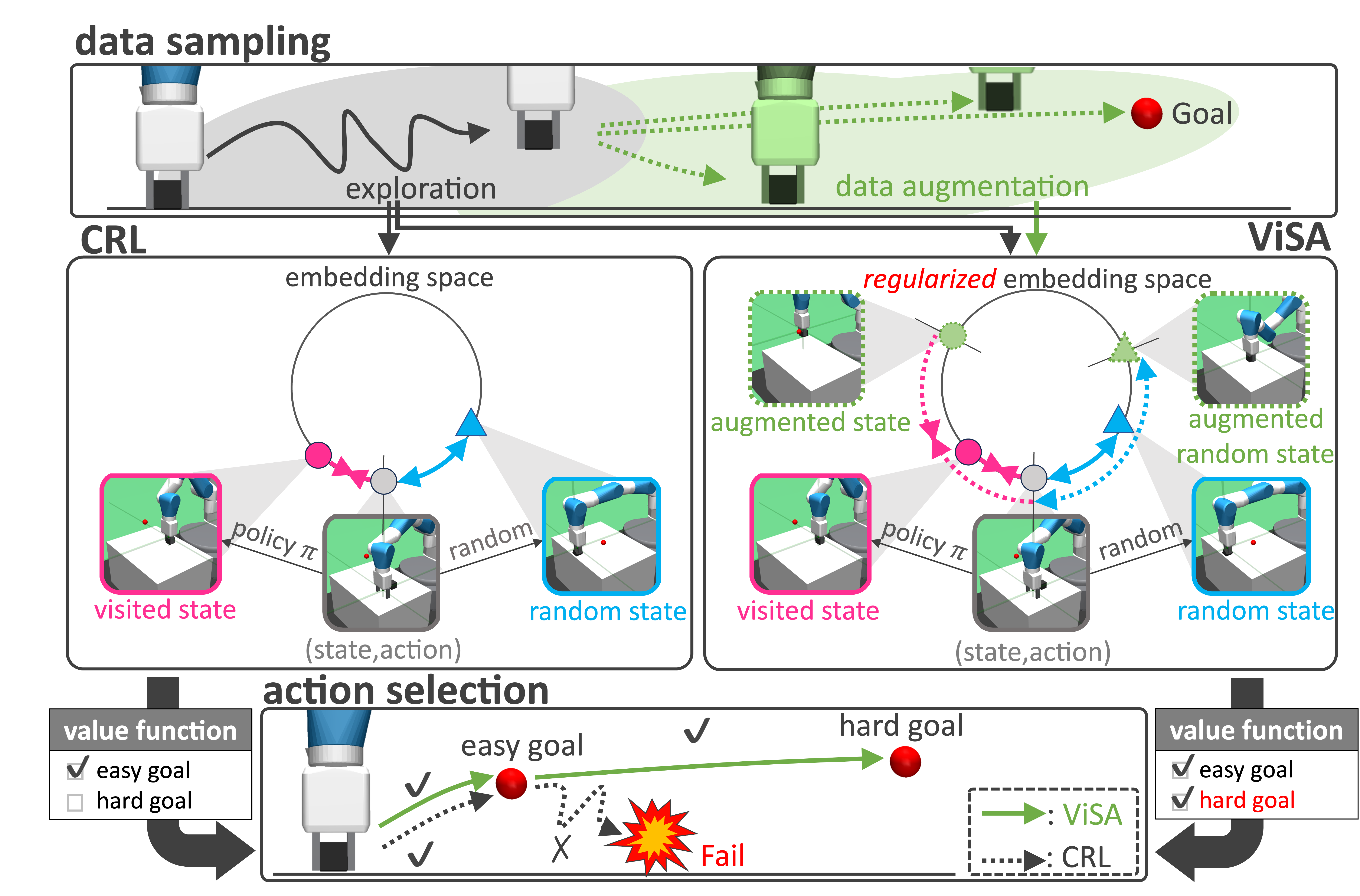

Goal-Conditioned Reinforcement Learning (GCRL) is a framework for learning a policy that can reach arbitrary given goals. In particular, Contrastive Reinforcement Learning (CRL) updates the policy using an approximation of the value function estimated via Contrastive Learning, achieving higher sample efficiency than conventional methods. However, because CRL treats visited states as pseudo-goals during learning, it can accurately estimate the value function only for a limited set of goals. To address this issue, we propose ViSA (Visited-State Augmentation), a novel data augmentation approach for CRL. ViSA consists of two components: 1) generating augmented states that supplement hard-to-visit states during on-policy exploration, and 2) learning a consistent embedding space by reformulating its objective function based on Mutual Information, so that the augmented states serve as auxiliary information that regularizes the embedding space. We evaluate ViSA in simulation and real-world robotic tasks and show improved goal-space generalization, enabling accurate value estimation.

Overview

We artificially augment visited states, producing augmented states that are inherently reachable under the current policy but insufficiently visited in limited on-policy rollouts. In addition, instead of using augmented states merely as additional visited states, we leverage them as auxiliary information to regularize the embedding space, encouraging it to jointly calibrate relative distances to both rarely visited and originally visited states. This prevents the embedding space from overfitting to the limited set of visited states obtained from on-policy rollouts.
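To make the pseudo-goal limitation concrete, the sketch below shows hindsight goal relabeling in the style of the original CRL formulation, where the goal for step t is a state visited later in the same trajectory and the offset k is drawn from a geometric distribution P(k) = (1 - γ)γ^(k-1). This is a minimal sketch under that assumption, not ViSA's sampler; the function names and the list-of-states trajectory format are hypothetical. Because every sampled goal is an entry of `trajectory`, states the policy rarely reaches are rarely used as goals, which is the gap the augmented states are meant to fill.

```python
import math
import random
from typing import Sequence, TypeVar

State = TypeVar("State")


def sample_future_offset(gamma: float, rng: random.Random) -> int:
    """Draw k >= 1 with P(k) = (1 - gamma) * gamma ** (k - 1), for 0 < gamma < 1."""
    # Inverse-CDF sampling: a geometric offset satisfies P(k > n) = gamma ** n.
    u = 1.0 - rng.random()  # u lies in (0, 1], so log(u) is finite
    return 1 + math.floor(math.log(u) / math.log(gamma))


def sample_pseudo_goal(trajectory: Sequence[State], t: int, gamma: float,
                       rng: random.Random) -> State:
    """Relabel step t with a visited future state as its goal (hypothetical helper)."""
    if not 0 <= t < len(trajectory) - 1:
        raise ValueError("t must index a state that has at least one successor")
    k = sample_future_offset(gamma, rng)
    # Clip to the final state: the goal is always a state that was actually visited.
    return trajectory[min(t + k, len(trajectory) - 1)]


if __name__ == "__main__":
    rng = random.Random(0)
    trajectory = [f"s{i}" for i in range(10)]  # toy trajectory of state labels
    goals = [sample_pseudo_goal(trajectory, 3, 0.9, rng) for _ in range(5)]
    print(goals)  # every goal is one of s4..s9
```

Clipping to the last state keeps the sampler well defined near the end of an episode, at the cost of putting extra probability mass on the final state.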

Simulation experiments

Common experiment settings

To confirm that our method improves the learning performance of CRL across a variety of robotic tasks, we trained policies in simulation on several robotic tasks and compared task performance. As baselines, we used the previous CRL method and several goal-conditioned learning methods. Each method was trained over three trials. On this page, we present demonstrations comparing the proposed method with the previous CRL method.

Demonstration

Fetch push

A task in which a manipulator pushes a box to a target position on the table. The initial positions of the end-effector and the box, as well as the goal positions, were uniformly randomized.

Pick & Place

A task in which a manipulator grasps a box and transports it to a target position on the table or in the air. The initial positions of the end-effector and the box, as well as the goal positions, were uniformly randomized.

Robel Turn

A task in which a three-fingered robotic hand rotates a valve to an arbitrary target angle. The initial valve position, the robot fingertip positions, and the goals were uniformly randomized.

Robel Screw

A task in which the robot rotates a valve by half a turn at a constant speed.

Real-world Experiment

To evaluate the effectiveness of our method for real-world robot learning, we conducted comparative experiments against the previous CRL method using the Robel robot. Each task was trained under the same settings as in the simulation experiments. In addition, for Robel Turn, which has a large exploration space, we initialized the policy with a network trained in simulation and fine-tuned it on the real robot. For Robel Screw, we trained the policy from scratch.

Robel Turn

Robel Screw

Analysis of Mutual Information Estimation Accuracy

Setup

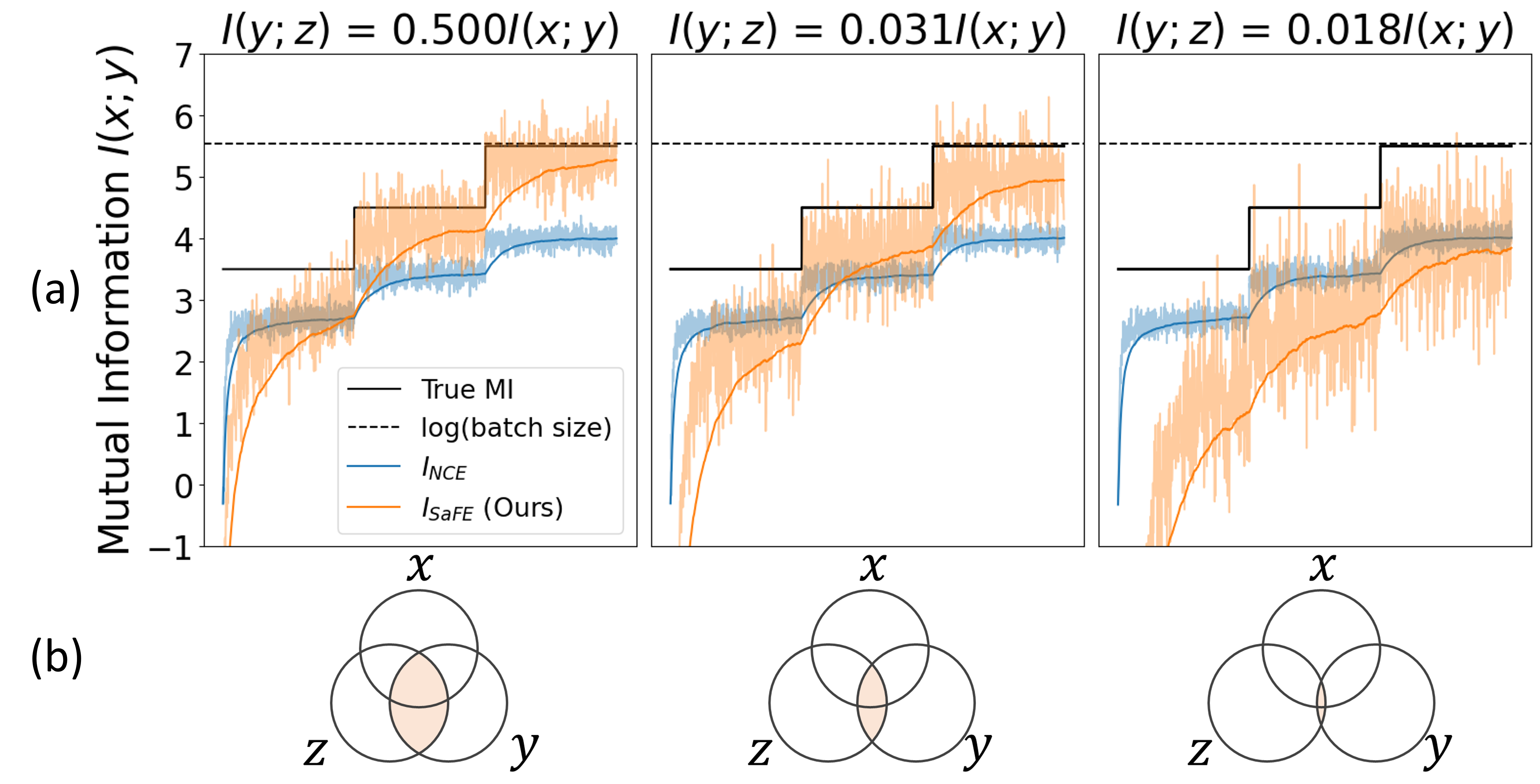

Learning the embedding space in CRL can be viewed as a mutual information (MI) estimation problem, since its objective maximizes the InfoNCE lower bound on MI (equivalently, minimizes the InfoNCE loss). To evaluate whether the factorized MI estimation used in ViSA improves estimation accuracy, we compared the errors between ground-truth and estimated MI values on synthetic datasets.
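As a reference point for the MI view, the sketch below computes the standard InfoNCE estimate from a batch of N paired embeddings. It assumes a CRL-style critic whose score is the inner product f(x_i, y_j) = φ(x_i)ᵀψ(y_j), with positive pairs on the diagonal; the function names are hypothetical, and this is the conventional estimator, not ViSA's factorized one. The estimate log N - L_NCE is a lower bound on MI and can never exceed log N.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def score_matrix(phi: Sequence[Vector], psi: Sequence[Vector]) -> List[List[float]]:
    """scores[i][j] = <phi_i, psi_j>; row i's positive pair is column i."""
    return [[sum(a * b for a, b in zip(p, q)) for q in psi] for p in phi]


def logsumexp(xs: Sequence[float]) -> float:
    m = max(xs)  # subtract the max for numerical stability
    return m + math.log(sum(math.exp(x - m) for x in xs))


def infonce_mi_estimate(scores: Sequence[Sequence[float]]) -> float:
    """Return log N - L_NCE, the InfoNCE lower bound on MI (in nats)."""
    n = len(scores)
    # L_NCE: mean cross-entropy of picking the diagonal entry in each row.
    loss = -sum(scores[i][i] - logsumexp(scores[i]) for i in range(n)) / n
    return math.log(n) - loss


if __name__ == "__main__":
    phi = [[5.0, 0.0], [0.0, 5.0], [-5.0, 0.0], [0.0, -5.0]]
    print(infonce_mi_estimate(score_matrix(phi, phi)))  # close to log 4
```

One consequence worth keeping in mind: with a mini-batch of 256, this conventional bound is capped at log 256 ≈ 5.55 nats, so if MI is measured in nats, a ground-truth value of 5.5 sits close to that ceiling.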

We simulated the sample bias in CRL using a key property of Gaussian distributions: samples concentrate near the mean, and few samples occur far from it. Specifically, three variables corresponding to the anchor, the visited state, and the augmented state are generated via linear transformations with added Gaussian noise:

Here, is the identity matrix. To preferentially augment hard-to-sample states, we set and generated from a higher-variance Gaussian. The mini-batch size was set to 256, and was varied so that the ground-truth MI takes values in {3.5, 4.5, 5.5}, to test the generality of the estimator. For a fair comparison, the total number of and samples matched the number of samples in CRL. To validate the effect of augmented-state relevance on MI estimation, we varied so that corresponds to {1/2, 1/32, 1/55} of , as shown in panel (b) of the figure.
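The exact transformations in this setup are not reproduced here, but the key ingredient, a synthetic pair with a known ground-truth MI, can be sketched with the simplest Gaussian channel. Assuming x ~ N(0, I_d) and y = x + ε with ε ~ N(0, σ²I_d), the ground truth is I(x; y) = (d/2) ln(1 + 1/σ²), so σ can be solved for any target MI. The dimension d = 16 and all function names below are illustrative assumptions, not the settings behind the reported figure.

```python
import math
import random
from typing import List, Tuple


def gaussian_mi(noise_std: float, dim: int) -> float:
    """Ground-truth I(x; y) in nats for y = x + eps, x ~ N(0, I), eps ~ N(0, noise_std^2 I)."""
    return 0.5 * dim * math.log1p(1.0 / noise_std ** 2)


def noise_std_for_mi(target_mi: float, dim: int) -> float:
    """Invert gaussian_mi: choose the noise scale that yields target_mi."""
    return math.sqrt(1.0 / math.expm1(2.0 * target_mi / dim))


def sample_pairs(batch: int, dim: int, noise_std: float,
                 rng: random.Random) -> Tuple[List[List[float]], List[List[float]]]:
    """Draw a mini-batch of correlated (x, y) pairs."""
    xs, ys = [], []
    for _ in range(batch):
        x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        ys.append([xi + rng.gauss(0.0, noise_std) for xi in x])
        xs.append(x)
    return xs, ys


if __name__ == "__main__":
    rng = random.Random(0)
    for target in (3.5, 4.5, 5.5):  # ground-truth MI values from the text
        sigma = noise_std_for_mi(target, dim=16)
        xs, ys = sample_pairs(256, 16, sigma, rng)  # mini-batch size from the text
        print(f"target={target}  sigma={sigma:.4f}  check={gaussian_mi(sigma, 16):.4f}")
```

Feeding `sample_pairs` output into an estimator such as InfoNCE and comparing the result against `gaussian_mi` gives the kind of error measurement described above, though with a simpler data model than the three-variable construction.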

Results

The MI analysis results are shown in panel (a) of the figure. Across different MI values, our method (orange line) estimates MI closer to the ground truth (black line) than the conventional estimator, since augmented states supplement hard-to-sample data and regularize the embedding space. These results indicate that MI estimation becomes more accurate when augmented states are properly related to visited states.

Details of learning settings

The learning settings used in the experiments of this study are listed in the table below. The actor network uses two activation functions: 1) NormalArcTangent, applied only in the final layer, and 2) ReLU, applied in the remaining layers. To enable parallel learning, four actor instances run in parallel during exploration. For tasks using the Robel robot, however, parallel learning is not performed, and training uses only a single instance.

| Learning Setting | Value |
|---|---|
| number of actors | 4 (1 for the Robel Turn and Robel Screw tasks) |
| batch size | 128 |
| learning rate | 3e-4 |
| discount | 0.99 |
| gradient updates per step | 32 |
| actor target entropy | 0 |
| hidden layer sizes | (256, 256) |
| initial random data collection | 10,000 transitions |
| replay buffer size | 1,000,000 transitions |
| samples per insert | 256 |
| train-collect interval | 16 |
| representation dimension | 64 |
| activation function (critic) | ReLU |
| activation function (actor) | ReLU (hidden layers), NormalArcTangent (final layer) |
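For readers reimplementing the setup, the table can be mirrored as a typed configuration object. This is a hypothetical sketch: the class, field, and task names are illustrative, the string "NormalArcTangent" simply records the table entry, and nothing here is taken from the authors' code.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class TrainConfig:
    """Hyperparameters from the learning-settings table (field names are hypothetical)."""
    num_actors: int = 4
    batch_size: int = 128
    learning_rate: float = 3e-4
    discount: float = 0.99
    gradient_updates_per_step: int = 32
    actor_target_entropy: float = 0.0
    hidden_layer_sizes: Tuple[int, ...] = (256, 256)
    initial_random_transitions: int = 10_000
    replay_buffer_size: int = 1_000_000
    samples_per_insert: int = 256
    train_collect_interval: int = 16
    representation_dim: int = 64
    critic_activation: str = "ReLU"
    actor_hidden_activation: str = "ReLU"
    actor_output_activation: str = "NormalArcTangent"


ROBEL_TASKS = frozenset({"robel_turn", "robel_screw"})  # hypothetical task IDs


def config_for_task(task: str) -> TrainConfig:
    """Robel tasks train with a single actor instance instead of four."""
    base = TrainConfig()
    return replace(base, num_actors=1) if task in ROBEL_TASKS else base
```

A frozen dataclass keeps the settings immutable, and `dataclasses.replace` derives per-task variants without editing the shared defaults.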